Background

Gaoding is a multi-scene online design platform focusing on business design, breaking the technical limitations between hardware and software, bringing together creative content and design tools in one, providing quality solutions for design needs in different scenarios, meeting the design needs of all types of media such as images and videos, and making design easier.

We use Elasticsearch (refer to as ES) as the log retrieval component. With the growth of business volume, there are about 2T new data added every day, which needs to be saved for 15~30 days, putting considerable pressure on the disk and system. In ES, in order to ensure the performance of log writing and querying, most of them use high performance cloud disk with higher unit storage cost. However, in the actual business scenario, data that exceeds 7 days is only used for low frequency, and storing it all in high performance cloud disk will definitely lead to excessive cost and waste of space.

Solution

Elasticsearch version 7.10 introduced the concept of index lifecycle and started to support tiered data storage, which allows you to specify different nodes to use different disk media to distinguish between hot and cold data, such as using HDD disks to store warm and cold data, which can get more usage space and lower cost. This feature is ideal for log indexing scenarios.

Using JuiceFS instead of HDD disks for warm and cold data storage media is equivalent to getting unlimited capacity storage space. With ES's index lifecycle management, the entire lifecycle management of index creation - migration - destruction can be done automatically without manual intervention.In practice, the ES cluster is first upgraded to the latest version 7.13. Then, we split the hot and cold nodes, with the hot nodes prioritizing performance and the cold nodes prioritizing storage capacity and cost. At the same time, the index and template approach is adjusted to configure the data lifecycle, index template and data flow to complete the index data writing.

The flow of the entire index after tuning is shown in the following figure.

- Configure

index.routing.allocation.require.box_type:hotfor node filtering when the index is created. - Wait for the index to enter the warm cycle, adjust

index.routing.allocation.requirement.box_type:warmand migrate to the warm node after which the data is stored in the cold node and actually stored in JuiceFS. - Wait for the index to enter the delete cycle, ES will automatically delete the index data.

Benefits

What is JuiceFS used in the solution?

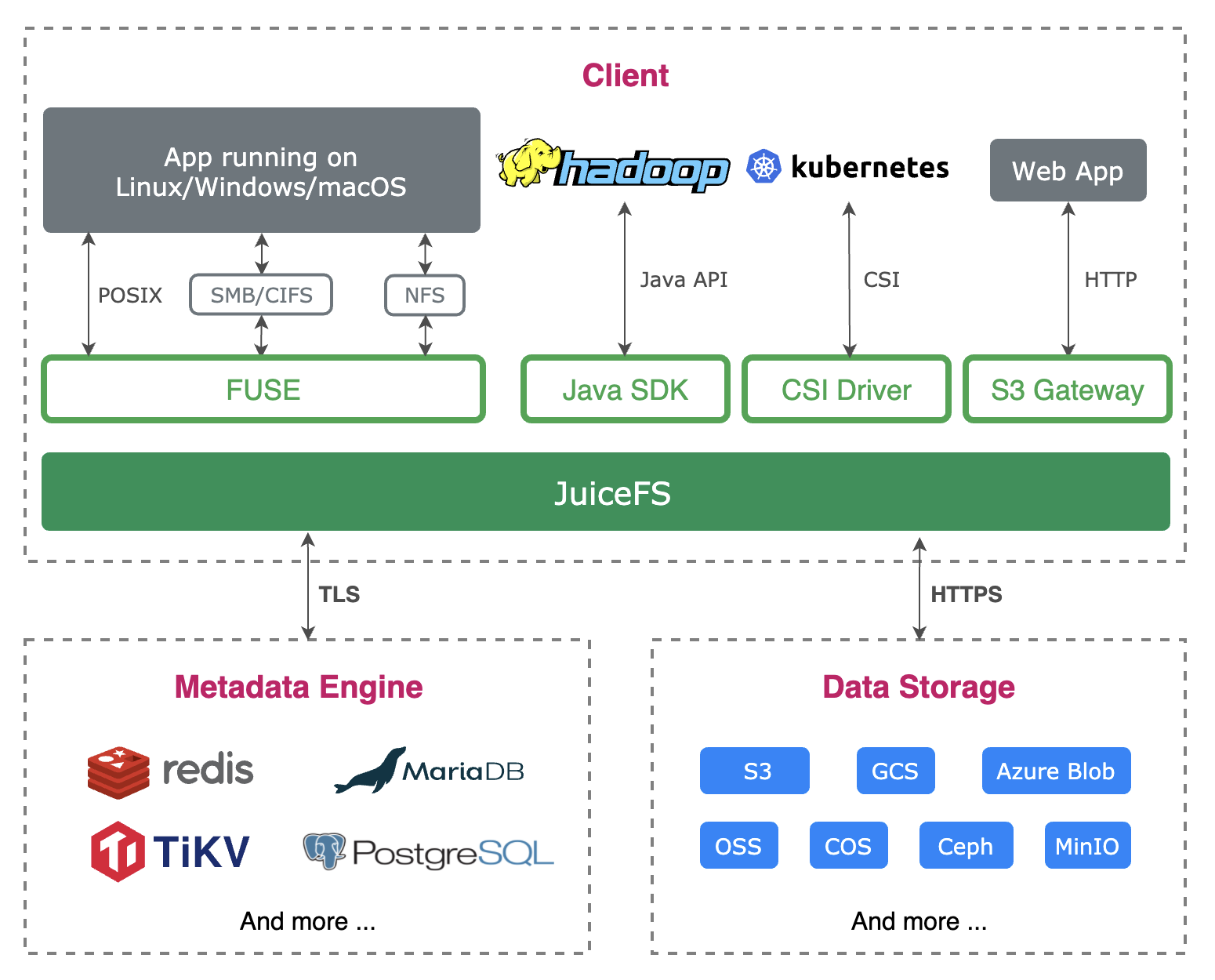

JuiceFS is an enterprise-class distributed file system designed for cloud environments. It offers full POSIX compatibility and provides a low-cost, unlimited space shared file system for applications. Using JuiceFS to store data, the data itself is persisted in object storage (e.g., Amazon S3, AliCloud OSS, etc.), combined with JuiceFS' metadata services to provide high-performance file storage.JuiceFS is available in fully managed public cloud services worldwide and is simple to configure and use. JuiceFS is also open-sourced on GitHub in early 2021 and has received a lot of attention and participation from developers around the world, with 3800+ stars so far.

In this scenario, the ES cluster warm nodes use JuiceFS for storage, so we don't have to do capacity planning and scaling for these nodes, and we don't have to migrate data in case of node failure, which reduces cost and brings great convenience to operation.

The persistence layer of JuiceFS uses object storage and elastic billing, and the TCO is lower than using ordinary cloud disk. The ES cluster has a total capacity of 60TB+, and through hot and cold tiered processing, 75% of the data exists in JuiceFS, saving nearly 60% on storage costs alone. If you add in the time and effort introduced by the operation team, this solution brings at least 70% reduction in TCO for the customer's data storage.

Practice

Cluster configuration

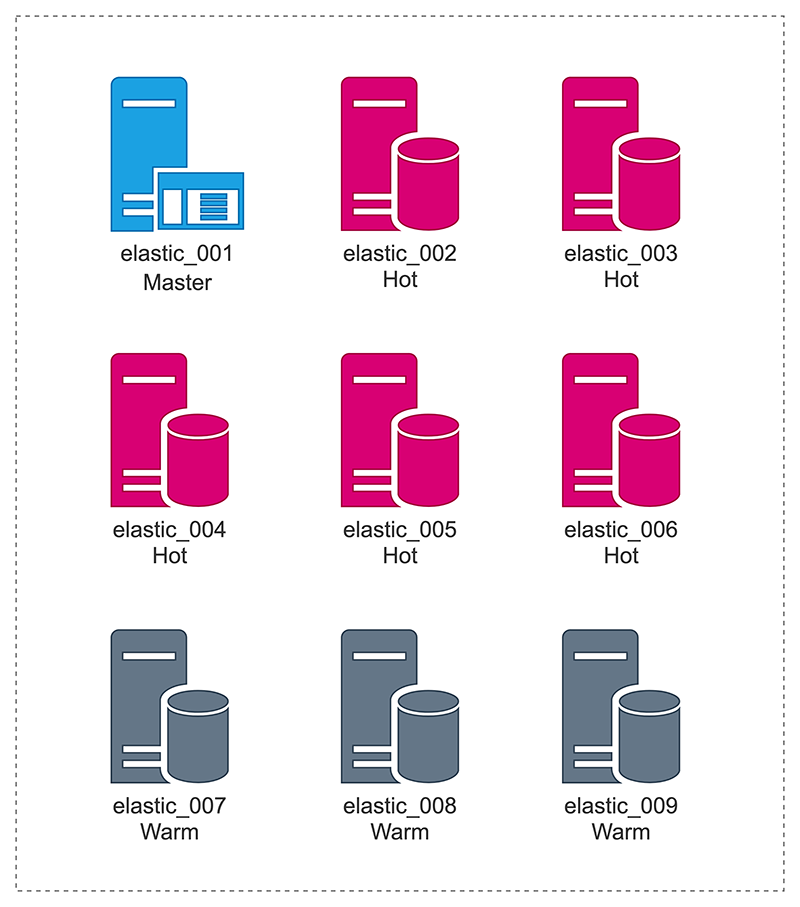

There are 9 nodes in the cluster, a single master node (elastic_001) and 8 data nodes, including 5 hot data nodes (elastic_002 ~ elastic_006) and 3 cold data nodes (elastic_007 ~ elastic_009).

Directory mounting and configuration

JuiceFS is mounted on the ES cold data node and provides the ES data directory.

The node is configured with a 2T data disk, which is mounted in the /data directory. The ES process is started as a container, and the data disk is mounted in the system's /data/elastic directory. Since the container mount system directory is used, the ES data directory (/data/elastic) cannot be pointed to the subdirectory which is a soft link in JuiceFS mount point. Therefore, the bind mount of the Linux system is used here to mount the JuiceFS sub-directory to the path /data/elastic. Just like on node 007:

Note: The following command uses JuiceFS Hosted Service, and the format is slightly different from the community version.

# ./juicefs mount gd-elasticsearch-jfs \

--cache-dir=/data/jfsCache --cache-size=307200 \

--upload-limit=800 /jfs

# mount -o bind /jfs/data-elastic-pro-007 /data/elasticThen you can see the content of /jfs/data-elastic-pro-007 in the directory of /data/elastic.

Similar mounting operations are also done on nodes 008 and 009.

If you are not familiar with JuiceFS's basic operations such as initialization and mounting, please refer to JuiceFS official document.

The local disk directory uses /data/jfsCache/gd-elasticsearch-jfs/rawstaging/, please be careful not to delete any files in this directory, otherwise data loss may occur.

cache-size and upload-limit are used to limit the local read cache size to 300GiB, and the bandwidth of write object storage does not exceed 800Mbps.

Performance Optimization

Reduce node load

Before adopting JuiceFS, the ES cluster lifecycle was configured with Force Merge, specifically with warm.actions.forcemerge.max_num_segments: 1, which causes data to be remerged during Rollover, putting a lot of pressure on the CPU. This step is completely unnecessary, and turning off the Force Merge configuration will avoid unnecessary performance overhead and reduce node load.

Rollover parameter configuration optimization

Since the warm phase data is written to JuiceFS and eventually persisted to the object storage, the application layer does not need to store multiple replicas, so you can set replicas to 0 during index rollover, i.e. warm.actions.number_of_replicas: 0.

In addition, consider that when the index data is migrated to the warm phase, the data is not written anymore, so you can set the index to be read-only in the warm phase, i.e. warm.actions.readonly: {}, and turn off the data writing to the index to reduce memory usage.

Summary

As time goes by and business volume grows, enterprises are bound to face the dual challenges of larger data storage and management. In this case, the lifecycle management capability of Elasticsearch is fully utilized to store log data in a tiered manner according to business needs. The hot data that needs to be used frequently is stored in SSD, while the low-frequency data that needs to be used more than 7 days is stored in JuiceFS, which is more cost-effective, saving 60% of storage cost for customers. At the same time, JuiceFS also provides nearly unlimited elastic space for applications, eliminating a series of operation and maintenance work such as capacity planning, expansion, and data migration, and improving the efficiency of enterprise IT architecture.