Using Apache Ranger

Apache Ranger is a security framework that brings comprehensive security to the Apache Hadoop ecosystem. It provides a central UI for managing security policies across Hadoop applications such as HDFS and Hive.

Starting from version 4.8, the JuiceFS Hadoop Java SDK supports Ranger (except for the Audit functionality, for now).

When Kerberos is not enabled for a Hadoop cluster, JuiceFS periodically picks a random client to fetch security policies from Ranger Admin and stores them as a file in JuiceFS, so that all other clients can reuse this file and avoid putting more pressure on Ranger Admin. If Kerberos is enabled, follow the steps below to handle policy fetching correctly.

- Preparation

Install Apache Ranger if you haven't already.

-

Enable Ranger support in JuiceFS console

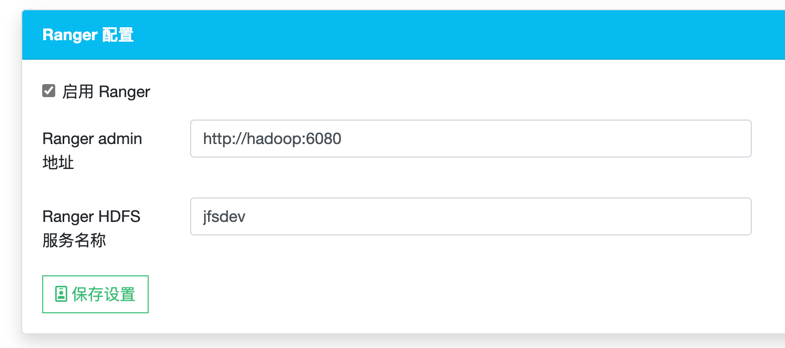

Enable Ranger in the settings page, and provide Ranger Admin address and Ranger HDFS Service Name:

Notice, if the second part of the value

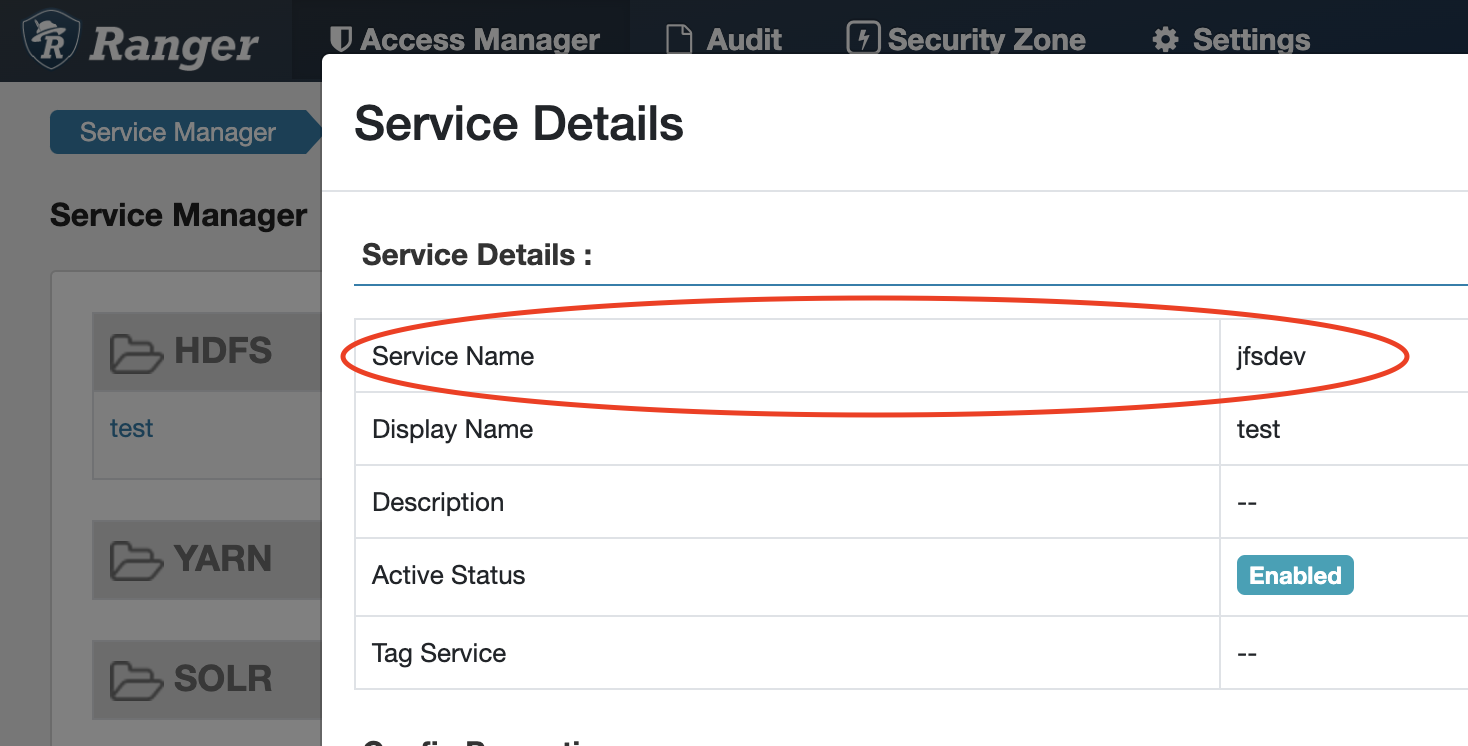

ranger.spnego.kerberos.principalishostname. Then the value of Ranger Admin must behostname. IP address should not be used.You can find Ranger HDFS Service Name in Ranger Admin UI - Service Manager - HDFS Service:
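As a quick sanity check of the hostname rule above, the following sketch compares the host part of a Kerberos principal with the host in a Ranger Admin address. It assumes the common `primary/host@REALM` principal layout; the function names, the example principal, and the example address (including port 6080) are hypothetical and not part of JuiceFS or Ranger.

```shell
# Hypothetical helpers for illustration; not part of JuiceFS or Ranger.

# Print the second part (host) of a principal such as HTTP/host@REALM.
principal_host() {
  p=${1#*/}        # drop everything up to and including the first "/"
  echo "${p%@*}"   # drop the trailing "@REALM"
}

# Print the host of an address such as http://host:port/path.
url_host() {
  h=${1#*://}      # drop the scheme
  h=${h%%/*}       # drop any path
  echo "${h%%:*}"  # drop the port
}

# Succeed only if the principal host and the address host are identical.
same_host() {
  [ "$(principal_host "$1")" = "$(url_host "$2")" ]
}

# Example values are assumptions; substitute your own principal and address.
if same_host "HTTP/ranger01.example.com@EXAMPLE.COM" "http://ranger01.example.com:6080"; then
  echo "Ranger Admin address matches the principal host"
else
  echo "Mismatch: use the hostname from the principal, not an IP address"
fi
```

A plain string comparison is used on purpose: an IP address that resolves to the same machine still fails the check, which mirrors the rule that an IP address should not be used.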

- Optional: Configure Kerberos

Enabling Kerberos prevents JuiceFS clients from fetching security policies from Ranger. You need to grant download permission by setting `policy.download.auth.users` and `tag.download.auth.users` in Ranger Admin UI - HDFS Service; specify multiple users as a comma-separated string. After that, security policies must be refreshed by a user with download permission.

Use the command below to fetch security policies and store them in JuiceFS (consider setting up a cron job for it), replacing `{PRINCIPAL}` with one of the users in `policy.download.auth.users`:

    hadoop jar juicefs-hadoop.jar com.juicefs.Main \
      ranger \
      --fs jfs://{VOL_NAME}/ \
      --keytab /path/to/keytab \
      --principal {PRINCIPAL}
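For the cron job suggested above, a small wrapper script could look like the following sketch. The script path, the hourly schedule, the default values, and the `DRY_RUN` switch are all assumptions made for illustration; only the `hadoop jar ... ranger` invocation itself comes from this document.

```shell
#!/bin/sh
# Hypothetical wrapper around the policy refresh command from this document.
# Example crontab entry (hourly schedule and script path are assumptions):
#   0 * * * * DRY_RUN=0 /usr/local/bin/refresh-ranger-policies.sh

# Placeholder defaults; override them through the environment.
: "${JFS_JAR:=juicefs-hadoop.jar}"
: "${VOL_NAME:=vol}"
: "${KEYTAB:=/path/to/keytab}"
: "${PRINCIPAL:=hdfs}"
: "${DRY_RUN:=1}"   # 1 = only print the command, 0 = actually run it

refresh_policies() {
  if [ "$DRY_RUN" = "1" ]; then
    # Print the command that would be executed, without running it.
    echo "hadoop jar $JFS_JAR com.juicefs.Main ranger --fs jfs://$VOL_NAME/ --keytab $KEYTAB --principal $PRINCIPAL"
  else
    hadoop jar "$JFS_JAR" com.juicefs.Main \
      ranger \
      --fs "jfs://$VOL_NAME/" \
      --keytab "$KEYTAB" \
      --principal "$PRINCIPAL"
  fi
}

refresh_policies
```

Defaulting to a dry run makes it easy to confirm the assembled command before handing the script to cron with `DRY_RUN=0`.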

Verify

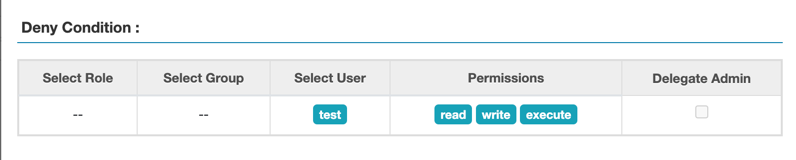

In Ranger Admin UI, add a Deny Condition Policy for test user to Resource Path /:

Run the commands below to check that the newly added policy works:

    # non-Kerberos environment
    HADOOP_USER_NAME=test hadoop fs -ls jfs://vol/
    ls: Permission denied: user=test, access=READ_EXECUTE, path="/"

    # Kerberos environment
    kinit test
    hadoop fs -ls jfs://vol/
    ls: Permission denied: user=test, access=READ_EXECUTE, path="/"
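The check above could also be scripted. The sketch below defines a hypothetical helper, `is_denied`, that looks for the `Permission denied` message for a given user in captured command output. The helper name and the status messages are assumptions, and the `hadoop` call only runs where the `hadoop` command is available.

```shell
# Hypothetical helper: succeed if the output reports "Permission denied" for the user.
is_denied() {
  case "$1" in
    *"Permission denied: user=$2,"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Non-Kerberos variant of the check; skipped when hadoop is not installed.
if command -v hadoop >/dev/null 2>&1; then
  # The listing is expected to fail, so ignore its exit status and keep the output.
  out=$(HADOOP_USER_NAME=test hadoop fs -ls jfs://vol/ 2>&1) || true
  if is_denied "$out" test; then
    echo "deny policy is in effect for user test"
  else
    echo "user test was not denied; the policy may not be loaded yet"
  fi
fi
```

In a Kerberos environment, the same helper can be applied to the output of `hadoop fs -ls jfs://vol/` after running `kinit test`.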