Set up object storage

Access Key and Secret Key

If your cloud provider lets you grant virtual machines access to object storage through a bucket access policy, so that no credentials are needed (for example, AWS IAM), you can omit the keys during juicefs auth or juicefs mount (leave them empty). See juicefs auth for details.

If you prefer ease of use, we recommend granting the API keys full read/write access plus the CreateBucket permission, so that the JuiceFS Client can create the bucket for you on the first successful mount.

For environments with strict security policies, you can further limit object storage permissions:

- For read/write clients or mount points, the minimum permissions are HeadObject|GetObject|PutObject|DeleteObject. You can restrict access to the file system prefix path (the file system name by default) in the corresponding bucket (juicefs-<VOL_NAME> by default). With minimum permissions, JuiceFS cannot create the object storage bucket for you, so create it manually in advance.
- If you need to run juicefs gc to scan for potential object leaks, ListObjects is required.
- If you need to run juicefs fsck to scan for corrupted files, HeadObject is needed.
- If you need to use juicefs import, object storage replication, or juicefs sync, ListObjects and HeadObject are required as well.
- If you need to use the Convert feature, UploadPartCopy is required.
- For read-only clients, you can limit object storage permissions to read-only. However, we recommend changing the file system access token to read-only as well: otherwise, juicefs auth runs a PUT test against the associated bucket, and its failure will break the mount procedure. When the file system token is set to read-only, this test is skipped automatically. You can also set the JFS_NO_CHECK_OBJECT_STORAGE=1 environment variable before running JuiceFS commands to skip this check.
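The permission list above can be turned into a small planning aid when writing a restrictive policy. The following Python sketch is illustrative only: the feature labels (gc, fsck, import, replication, sync, convert) and the function name required_permissions are hypothetical, and the permission names are the generic operation names used on this page, which you still need to map to your provider's policy actions.

```python
# Minimum permissions for a read/write client, as listed above.
MINIMUM = {"HeadObject", "GetObject", "PutObject", "DeleteObject"}

# Extra permissions per optional feature (hypothetical labels for the items above).
EXTRA = {
    "gc": {"ListObjects"},
    "fsck": {"HeadObject"},
    "import": {"ListObjects", "HeadObject"},
    "replication": {"ListObjects", "HeadObject"},
    "sync": {"ListObjects", "HeadObject"},
    "convert": {"UploadPartCopy"},
}


def required_permissions(features=()):
    """Return the sorted list of permissions a read/write client needs for `features`."""
    perms = set(MINIMUM)
    for feature in features:
        if feature not in EXTRA:
            raise ValueError(f"unknown feature: {feature!r}")
        perms |= EXTRA[feature]
    return sorted(perms)


if __name__ == "__main__":
    # prints DeleteObject|GetObject|HeadObject|ListObjects|PutObject|UploadPartCopy
    print("|".join(required_permissions(["gc", "convert"])))
```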

Common object storage

Amazon S3

Refer to "AWS security credentials". If you use an IAM role to grant permissions to applications running on Amazon EC2 instances, you can omit credentials during juicefs mount.

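An IAM policy example, to attach to the IAM user or role whose credentials JuiceFS uses (HeadObject requests are authorized by s3:GetObject, so there is no separate action for them):

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "s3:DeleteObject",
        "s3:GetObject",
        "s3:PutObject"
      ],
      "Effect": "Allow",
      "Resource": [
        "arn:aws:s3:::juicefs-example/example/*"
      ]
    }
  ]
}
```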
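The example above only covers objects under one prefix. Features that list objects (juicefs gc, juicefs sync and so on) additionally need ListObjects, which IAM authorizes through s3:ListBucket on the bucket ARN rather than on object ARNs. A minimal Python sketch that builds such a policy, assuming the bucket juicefs-example and prefix example from the example above, and assuming that listing only happens under the file system prefix (hence the s3:prefix condition). The function name s3_policy is hypothetical; review the output against your own setup before applying it.

```python
import json


def s3_policy(bucket, prefix, allow_list=False):
    """Build an IAM identity-based policy limited to objects under `prefix` in `bucket`."""
    statements = [
        {
            "Effect": "Allow",
            # HeadObject requests are authorized by s3:GetObject.
            "Action": ["s3:DeleteObject", "s3:GetObject", "s3:PutObject"],
            "Resource": [f"arn:aws:s3:::{bucket}/{prefix}/*"],
        }
    ]
    if allow_list:
        # ListObjects is authorized by s3:ListBucket on the bucket itself;
        # the condition only allows listing requests for keys under the prefix.
        statements.append(
            {
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            }
        )
    return {"Version": "2012-10-17", "Statement": statements}


if __name__ == "__main__":
    print(json.dumps(s3_policy("juicefs-example", "example", allow_list=True), indent=2))
```

Keeping the listing permission behind a flag mirrors the list at the top of this page: only enable it if you actually run the commands that need it.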

Google Cloud Storage

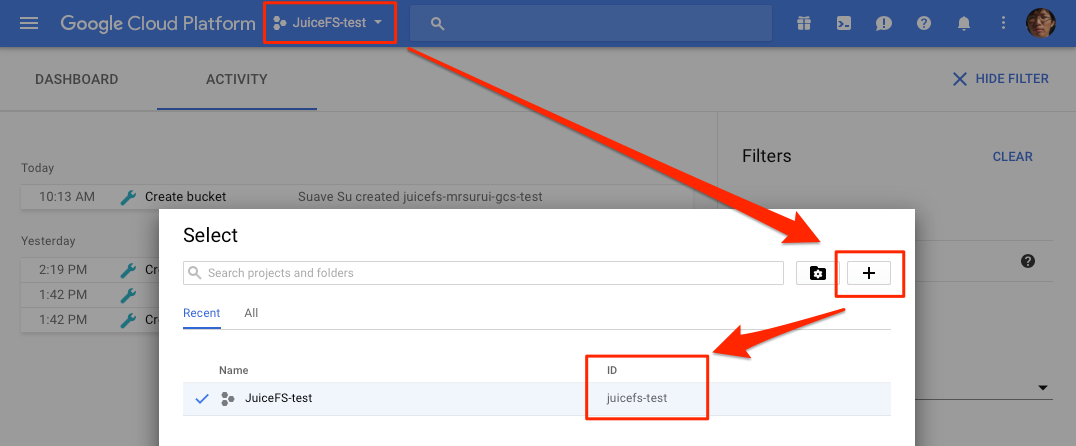

First, you should create a project in the console of Google Cloud Platform, remember your Project ID:

Download and install the Cloud SDK:

```shell
curl https://sdk.cloud.google.com | bash
```

Run the following command after installation:

```shell
gcloud auth application-default login
```

This completes authentication, which only needs to be done once.

Finally, run juicefs mount to mount your JuiceFS file system. You will be asked for the Project ID (alternatively, set it in the GOOGLE_CLOUD_PROJECT environment variable).

If you mount the file system with sudo, run gcloud auth with sudo as well, because the credentials are saved under the home directory of the user who runs gcloud. Otherwise, JuiceFS may fail to load them.

If JuiceFS runs on Compute Engine, we recommend granting the virtual machines full access to the Storage API.

Azure Blob Storage

Currently, service is only available at Microsoft Azure Chinese Region, contact us and other regions can be supported.

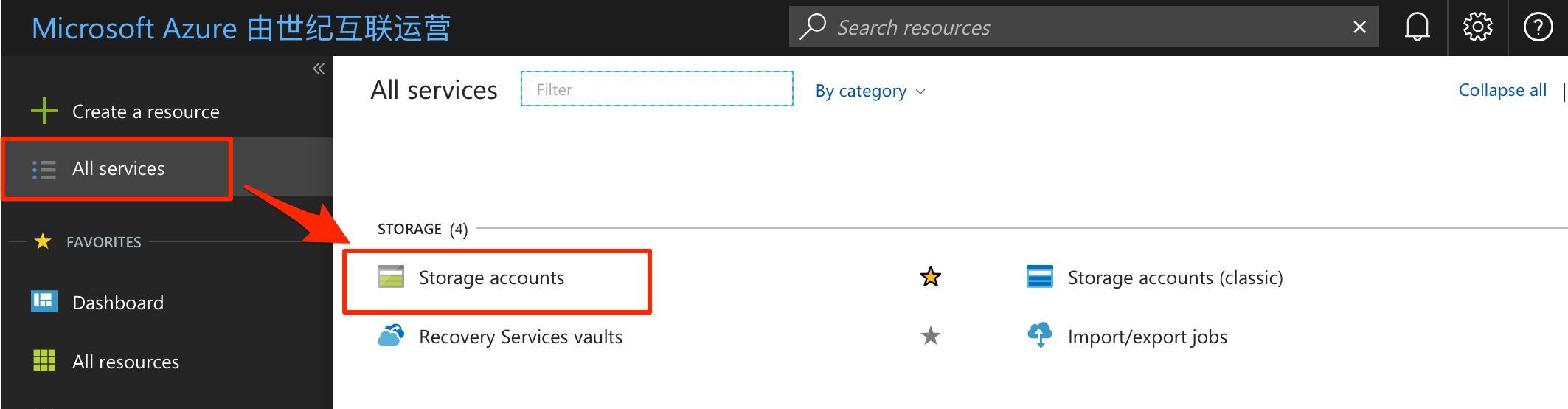

When the JuiceFS use the Azure Blob Storage as the underlying storage, you should create a storage account. Find Storage Accounts from the navigation of left panel.

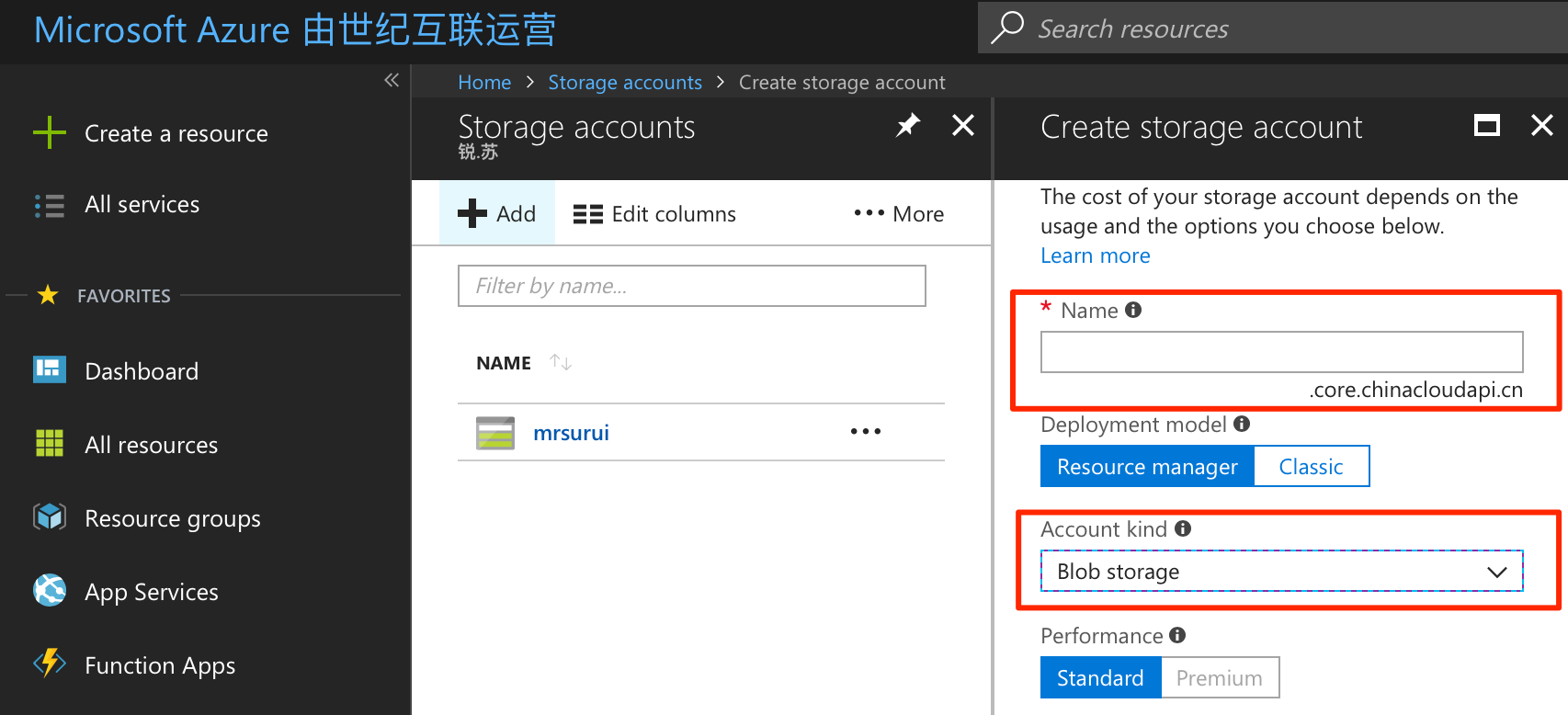

Create a new account in Storage accounts, the name will be requested at mounting the JuiceFS file system, the account kind should be "Blob storage".

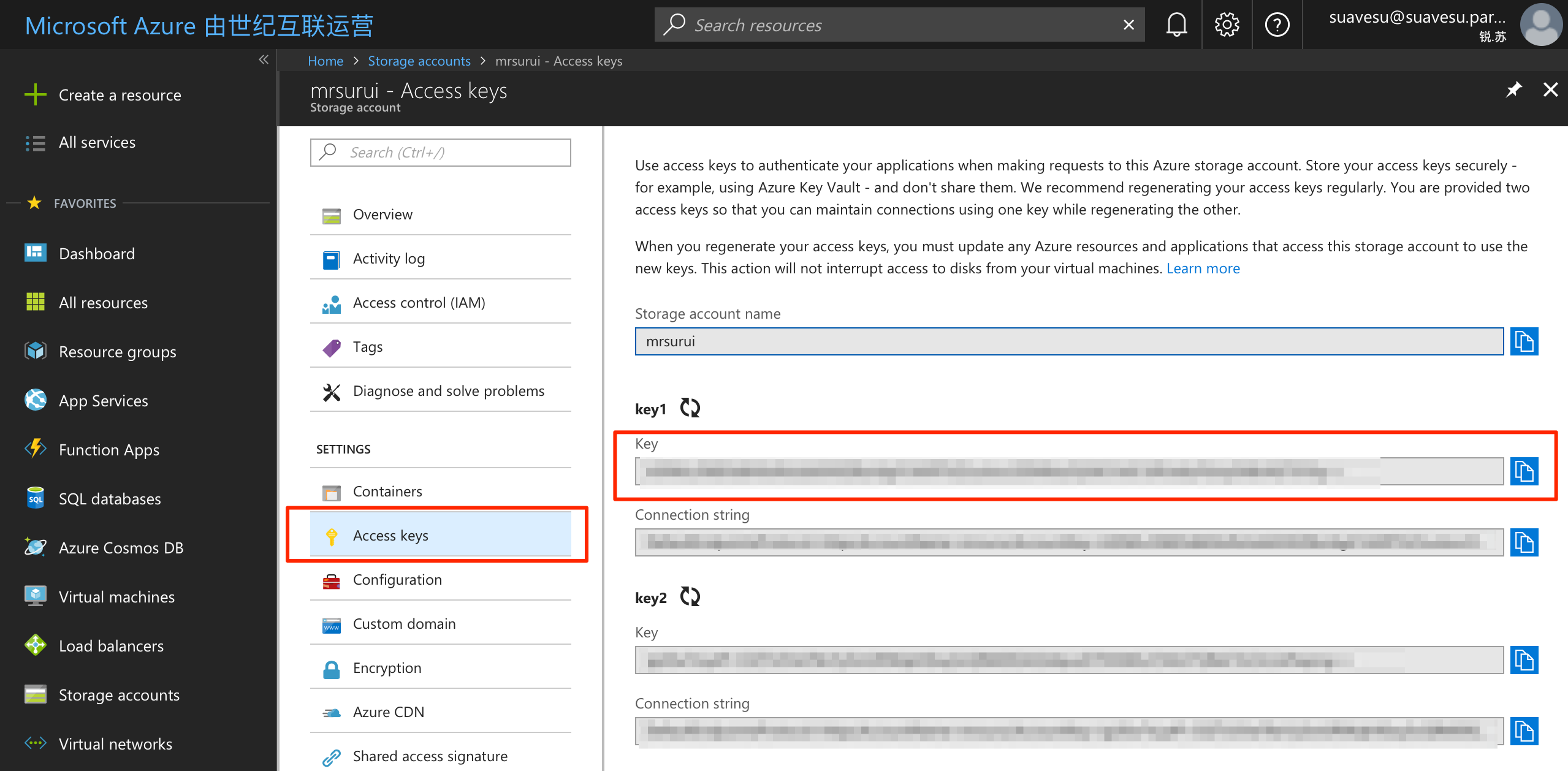

Enter the Access key from your storage account, there're two keys available.

Backblaze B2

Create an application key with read and write permissions on the Application Keys page.

For JuiceFS to create a bucket for you, the master application key is required. We recommend creating the bucket manually instead, using a name like juicefs-NAME, and then creating an application key with read-write access for JuiceFS.

IBM Cloud Object Storage

Accessing Cloud Object Storage requires an API key and a resource instance ID; refer to Retrieving your instance ID.

DigitalOcean Spaces

Refer to How To Create a DigitalOcean Space and API Key.

Wasabi

Refer to Creating a Root Access Key and Secret Key.

Alibaba Cloud OSS

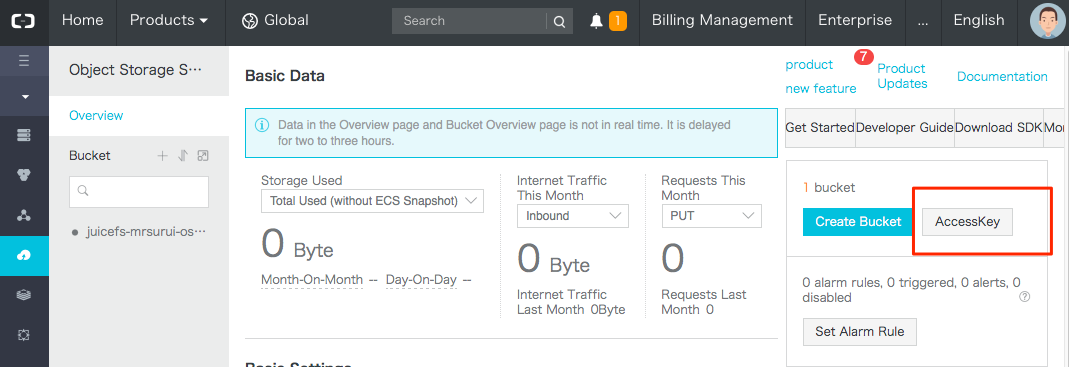

Obtain Access Key in the object storage console:

Create a key for JuiceFS mount:

A bucket policy example:

{

"Statement": [

{

"Action": [

"oss:DeleteObject",

"oss:GetObject",

"oss:HeadObject",

"oss:PutObject"

],

"Effect": "Allow",

"Resource": [

"acs:oss:*:*:juicefs-example/example/*"

]

}

],

"Version": "1"

}

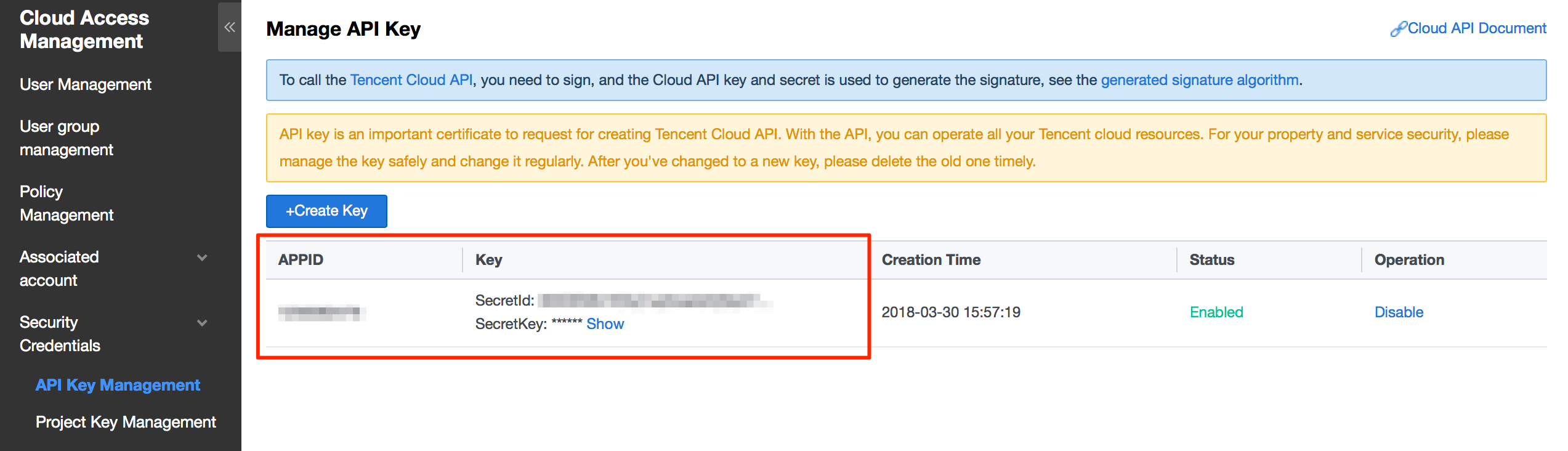

Tencent Cloud COS

When using Tencent Cloud COS, mounting JuiceFS requires a Tencent APPID in addition, so we recommend fill in the APPID into Bucket when creating the file system, using format {bucket}-{APPID}. If you didn't specify an APPID when creating the file system, JuiceFS will ask for APPID interactively during mount. Moreover, you can specify APPID in juicefs auth, by the --bucket parameter, using the same format {bucket}-{APPID}.

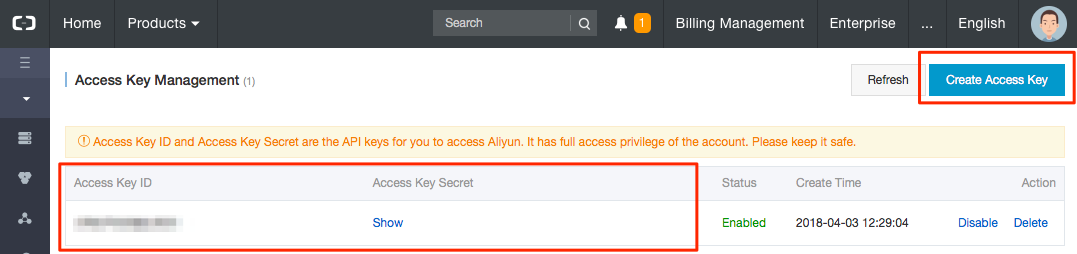

APPID is in the Account Info.

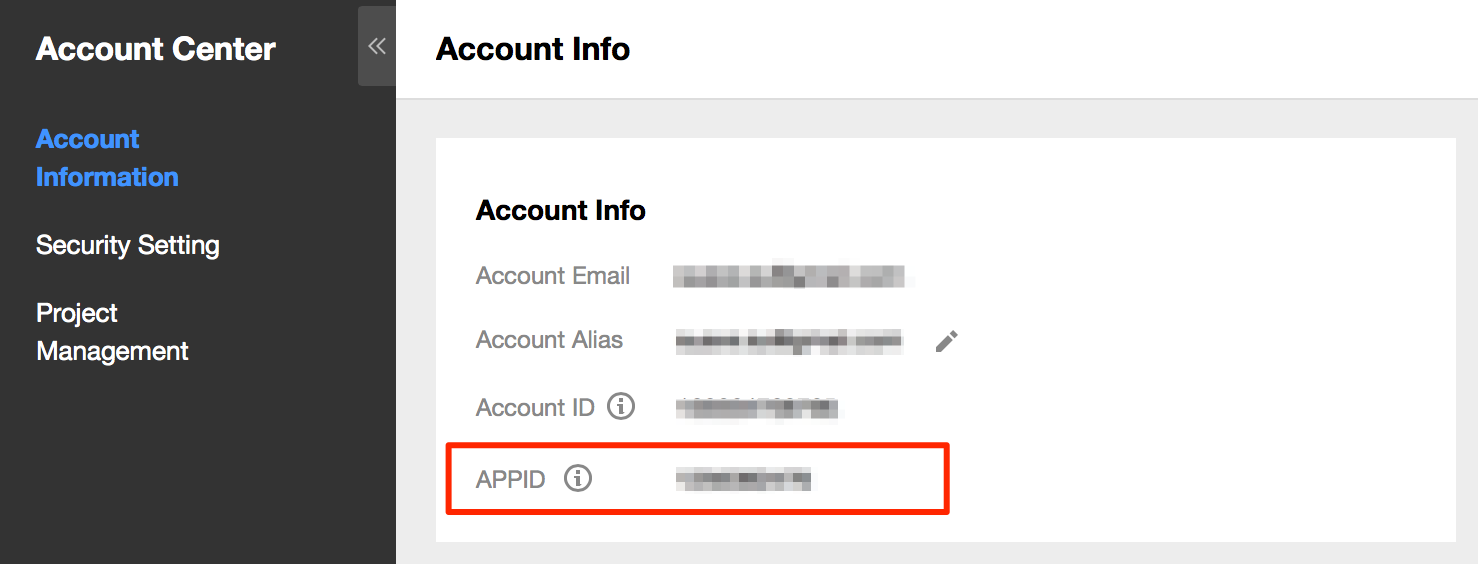

Secret ID and Secret Key are managed in the API Key Management, you need to create a pair if it's empty.

A CAM policy example:

```json
{
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "cos:DeleteObject",
        "cos:GetObject",
        "cos:HeadObject",
        "cos:PutObject"
      ],
      "Resource": [
        "qcs::cos:ap-guangzhou:uid/1250000000:juicefs-example-1250000000/example/*"
      ]
    }
  ],
  "Version": "2.0"
}
```
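Since the APPID appears both in the Bucket field ({bucket}-{APPID}) and in the policy Resource, a small helper can keep the two consistent. A Python sketch, assuming the resource format shown in the example above (qcs::cos:<region>:uid/<APPID>:<bucket>-<APPID>/<prefix>/*) and assuming the APPID is numeric, like 1250000000; the function names cos_bucket_field and cos_resource are hypothetical.

```python
def cos_bucket_field(bucket, appid):
    """Return '{bucket}-{APPID}', the format used for the Bucket field and --bucket."""
    appid = str(appid)
    if not appid.isdigit():
        # Assumption: Tencent APPIDs are numeric, like 1250000000 in the example above.
        raise ValueError(f"APPID should be numeric, got {appid!r}")
    suffix = f"-{appid}"
    # Do not append the APPID twice if the bucket name already carries it.
    return bucket if bucket.endswith(suffix) else bucket + suffix


def cos_resource(region, appid, bucket, prefix):
    """Build a policy Resource string scoped to objects under `prefix`."""
    return f"qcs::cos:{region}:uid/{appid}:{cos_bucket_field(bucket, appid)}/{prefix}/*"


if __name__ == "__main__":
    print(cos_bucket_field("juicefs-example", 1250000000))
    print(cos_resource("ap-guangzhou", 1250000000, "juicefs-example", "example"))
```

Called with the values from the example (ap-guangzhou, 1250000000, juicefs-example, example), cos_resource reproduces the Resource string shown in the policy above.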

Huawei Cloud OBS

Refer to How Do I Manage Access Keys?.

Baidu Cloud BOS

Log in to the Baidu Cloud console, then open Security Authentication from the account dropdown menu in the upper-right corner of the page.

Kingsoft Cloud KS3

Refer to User Access Key Management.

QingCloud QingStor

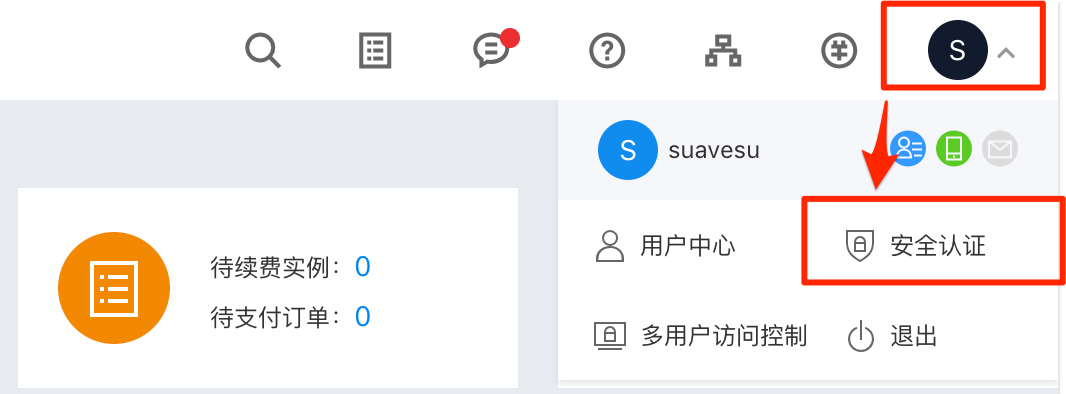

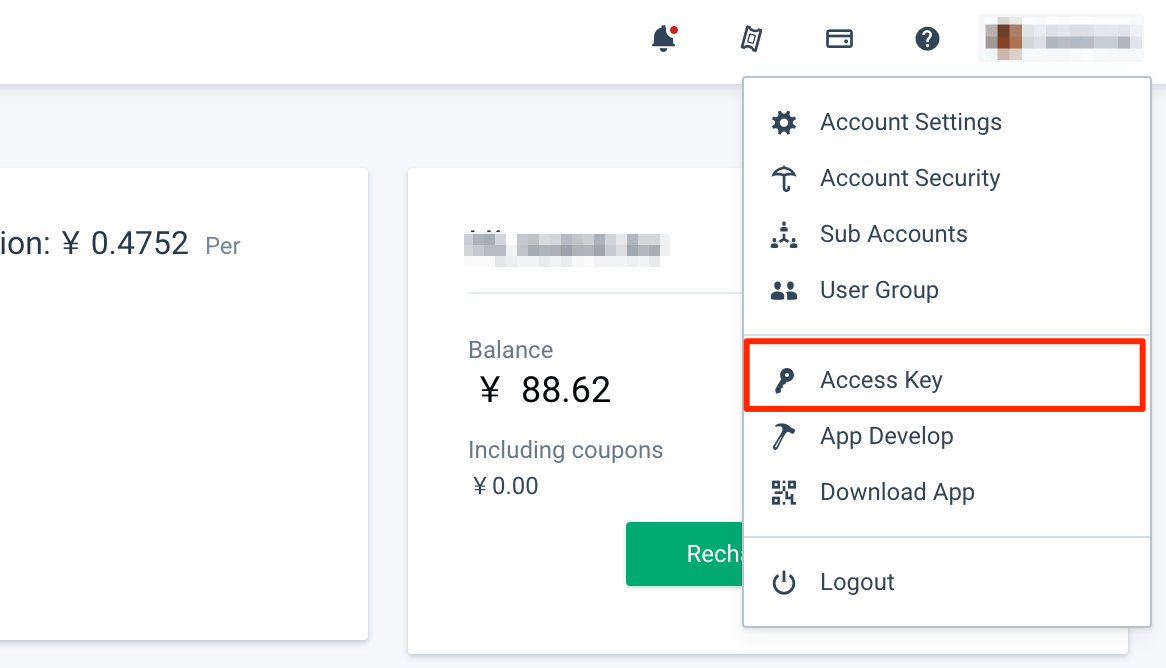

Login the Qingcloud Console, you'll find Access Keys in the dropdown menu of your account at the right corner.

Qiniu Kodo

Refer to How to get Access Key and Secret Key.

UCloud US3

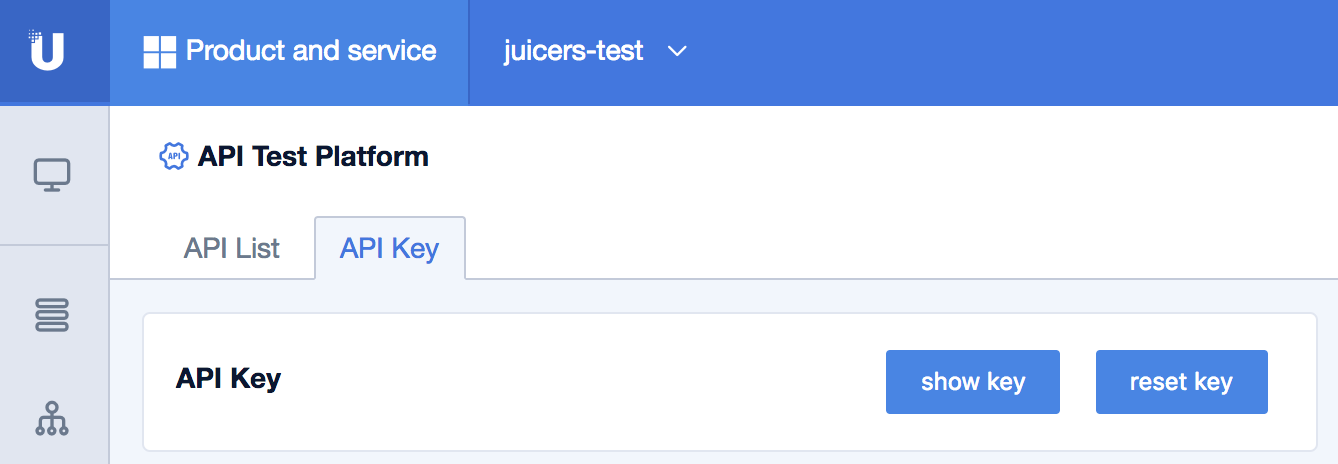

Login the UCloud console, you'll find your API key in UAPI in the Monitoring management of Product and service.

Ceph

Ceph provides two sets of APIs: RADOS and RGW. RADOS is Ceph's underlying protocol, while RGW is an S3 gateway that exposes standard S3 APIs. Connecting via RADOS is recommended because it bypasses RGW and achieves lower latency. If you decide to use Ceph via S3, use it like any other S3 object storage service.

When the RADOS protocol is used, JuiceFS relies on librados2, which supports Ceph 12.2 and later. You'll need to provide the cluster name (e.g. ceph) and a user name (e.g. client.admin).

Other object storage (not directly supported)

JuiceFS supports any vendor that provides both virtual machine hosting and object storage services. If your preferred vendor is not listed in our Cloud Service, or the desired region is not supported yet, contact a Juicedata engineer; deployment can typically be completed within 48 hours. Some cases, however, are not directly supported by JuiceFS; they are covered in the following sections.

Cloudflare R2 (and other S3-compatible object storage)

The Cloudflare R2 ListObjects API is not fully S3-compatible (the result list is not sorted), so some JuiceFS features do not work with it, for example juicefs gc, juicefs fsck, juicefs sync, and juicefs destroy.
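Because these commands depend on the listing order, you may want to check how an S3-compatible service orders its listings before relying on them. S3 returns ListObjects results in ascending UTF-8 binary order of key names. A minimal Python sketch, assuming you have already fetched one page of keys in the order the service returned them (for example, the Key values under "Contents" in a boto3 list_objects_v2 response); the helper name first_unsorted is hypothetical.

```python
def first_unsorted(keys):
    """Return the index of the first key that sorts before its predecessor in
    UTF-8 binary order, or None if the whole listing is in ascending order."""
    encoded = [key.encode("utf-8") for key in keys]
    for i in range(1, len(encoded)):
        if encoded[i] < encoded[i - 1]:
            return i
    return None


if __name__ == "__main__":
    # Keys in the order a listing call returned them (illustrative values).
    print(first_unsorted(["example/a", "example/c", "example/b"]))  # prints 2
```

A sorted page does not prove that the service always sorts, but an unsorted one is a strong hint that listing-based commands will misbehave.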

Cloudflare does not host virtual machines, so it is not in our list of supported providers. However, since R2 supports the S3 API, you can still use it with a JuiceFS file system. In fact, any object storage that implements the S3 API can serve as a JuiceFS backend. The following steps use R2 as an example:

- When creating a file system from our web console, specify the object storage provider and region under Object Storage Region. Since R2 is not listed, select AWS and choose the region with the lowest latency to your application hosts (typically the geographically closest one). This configures the file system's storage protocol as S3, but the R2 S3 API is used instead of AWS S3.

- Fill in the complete object storage endpoint in the Bucket field, for example, https://xxx.r2.cloudflarestorage.com/dev.

- Go to the Settings tab and follow the tutorial to install the JuiceFS Client on your server.

- Prepare the R2 credentials and follow Quick Start to mount the file system.
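Before mounting, it can help to confirm that the credentials actually have the minimum permissions listed at the top of this page. A Python sketch under stated assumptions: the Bucket value is a path-style URL whose first path segment is the bucket name, as in the R2 example above; split_bucket_url, probe and the probe key name are hypothetical; and the S3 client is passed in so the sketch does not depend on a particular SDK. This is a manual test similar in spirit to the PUT check of juicefs auth, not what juicefs auth itself runs.

```python
import uuid
from urllib.parse import urlparse


def split_bucket_url(url):
    """Split a path-style URL such as https://host/bucket into (endpoint, bucket)."""
    parsed = urlparse(url)
    bucket = parsed.path.strip("/").split("/")[0]
    if not parsed.scheme or not parsed.netloc or not bucket:
        raise ValueError(f"expected https://<host>/<bucket>, got {url!r}")
    return f"{parsed.scheme}://{parsed.netloc}", bucket


def probe(client, bucket, prefix):
    """Write, stat, read back and delete one small object under `prefix`.

    `client` is any object with boto3-style S3 methods (put_object, head_object,
    get_object, delete_object). The first failing call raises, which usually
    points at the missing permission.
    """
    # Hypothetical key name; the random suffix avoids clobbering existing data.
    key = f"{prefix}/juicefs-probe-{uuid.uuid4().hex}"
    payload = b"probe"
    client.put_object(Bucket=bucket, Key=key, Body=payload)  # needs PutObject
    try:
        client.head_object(Bucket=bucket, Key=key)  # needs HeadObject
        body = client.get_object(Bucket=bucket, Key=key)["Body"].read()  # needs GetObject
        if body != payload:
            raise RuntimeError(f"unexpected content read back from {key}")
    finally:
        client.delete_object(Bucket=bucket, Key=key)  # needs DeleteObject
    return key
```

With boto3 installed, a client created via boto3.client("s3", endpoint_url=endpoint, aws_access_key_id=..., aws_secret_access_key=...) can be passed in; depending on the provider, you may also need to set region_name. The same approach applies to other S3-compatible services such as MinIO.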

Self-hosted object storage

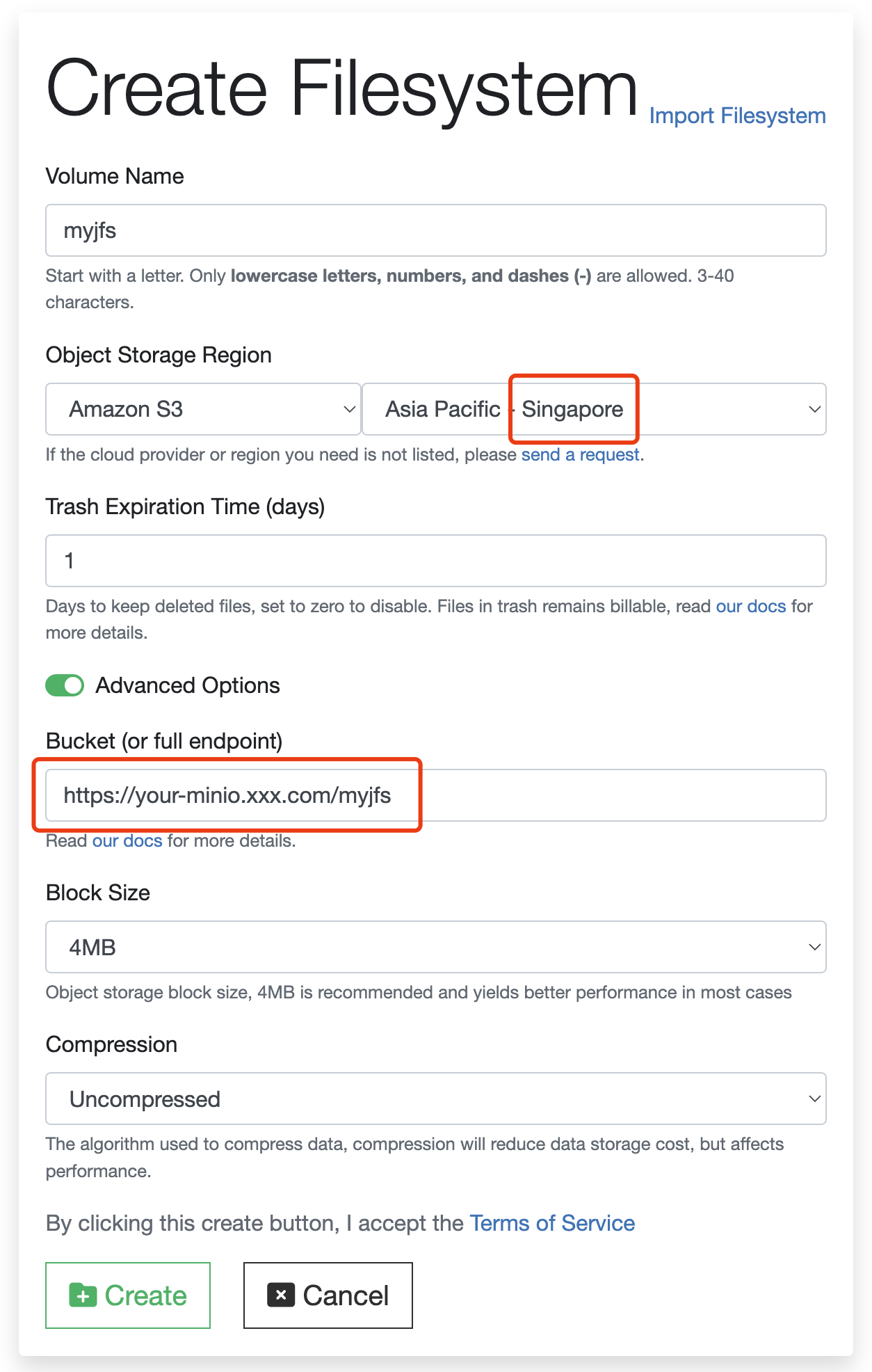

Usually a self-hosted object storage is used with on-premises JuiceFS deployment, but JuiceFS Cloud Service users can also work with self-hosted object storage when necessary. For example, to use MinIO in a data center in Singapore as a JuiceFS backend, follow these steps:

-

When you create a file system from the web console, note that Object Storage Region will not include MinIO. You can associate MinIO via either the S3-compatible API or the native MinIO SDK:

- If you want to use the S3-compatible API, choose AWS and select a region that provides the best latency between your application hosts (in this case, Asia Pacific - Singapore). The file system will be configured to use

s3as its storage type, using the MinIO S3 API instead of AWS S3. - If you prefer the native MinIO SDK, continue with the S3-compatible approach and contact a Juicedata engineer to modify the storage type later.

- If you want to use the S3-compatible API, choose AWS and select a region that provides the best latency between your application hosts (in this case, Asia Pacific - Singapore). The file system will be configured to use

-

Fill in the complete endpoint in the Bucket field, as shown in the following screenshot. If you prefer the native MinIO SDK for JuiceFS, please contact a Juicedata engineer for modification.

-

Click the Settings tab and follow the tutorial to install the JuiceFS Client in your host.

-

Prepare the MinIO credentials and follow Quick Start to mount the file system.